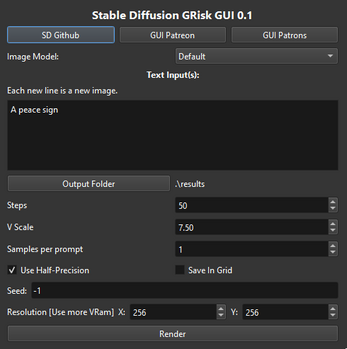

Stable Diffusion GRisk GUI 0.1

A downloadable tool for Windows

Requirement:

This project requires an Nvidia card that can run CUDA.

With a card with 4 GB of VRAM, it should generate 256x512 images.
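Here is a quick way to confirm that PyTorch can actually see a CUDA card. This is a minimal sketch. It assumes you have a separate Python install with PyTorch, because the GUI .exe does not ship a script like this, and `cuda_status` is a hypothetical helper name.

```python
# Sketch: report whether PyTorch can see any CUDA device.
# Assumes a separate Python environment with torch installed; the GUI .exe
# does not expose this check. cuda_status is a hypothetical helper.
def cuda_status() -> str:
    try:
        import torch  # optional: only needed for this check
    except ImportError:
        return "torch not installed"
    if not torch.cuda.is_available():
        return "no CUDA device"
    names = [torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())]
    return "CUDA devices: " + ", ".join(names)


print(cuda_status())
```

If this prints "no CUDA device" on a machine with an Nvidia card, the problem is likely the driver or CUDA setup and not the GUI.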

🎉 Attention! This version is highly outdated: 🎉

✨Download the latest update from Patreon.✨

👉 https://www.patreon.com/DAINAPP 👈

In the Patreon version you can run:

- 512x512 with 4 GB of VRAM

- Use an upscaler

- Faster renders with more schedulers

- Use img2img

- Use inpainting

- Load other models

- Many more options

What is this?

This is an interface to run the Stable Diffusion model.

In short: you write a text prompt and the model returns an image for each prompt.

You can read more about it here:

https://stability.ai/blog/stable-diffusion-public-release

Want some help with the prompts?

Check this site: https://lexica.art/

Running it:

Important: You should try to generate images at 512x512 for best results.

An .exe to run Stable Diffusion. It is still very much alpha, so expect bugs.

Just open Stable Diffusion GRisk GUI.exe to start using it.

Resolution needs to be a multiple of 64 (64, 128, 192, 256, etc.).
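If you have a target size in mind, you can snap it to the nearest valid value first. This is a small sketch of the multiple-of-64 rule above. The helper names are hypothetical, and rounding to the nearest multiple (rather than always down) is just one reasonable choice.

```python
# Sketch: snap a requested width/height to the nearest multiple of 64
# (minimum 64), following the GUI's stated resolution rule.
# snap_to_64 / snap_resolution are hypothetical helper names.
def snap_to_64(value: int) -> int:
    # Adding 32 before integer division rounds half up to the nearest multiple.
    return max(64, ((value + 32) // 64) * 64)


def snap_resolution(width: int, height: int) -> tuple[int, int]:
    return snap_to_64(width), snap_to_64(height)


print(snap_resolution(500, 300))  # (512, 320)
```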

Read This:

Summary of the CreativeML OpenRAIL License:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. We claim no rights on the outputs you generate, you are free to use them and are accountable for their use which should not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

Please read the full license here: https://huggingface.co/spaces/CompVis/stable-diffusion-license

Important Stuff:

- It seems that some GTX 1660 cards have a problem running models at half precision (the only option in this GUI for now).

- Samples currently don't work. It will always generate 1 image per prompt, but you can repeat the same prompt on many lines for a similar effect.

- The AI usually gives good results at 512x512; other resolutions may affect the quality.

- More steps = better quality. More steps don't use more memory, just more time.

- >= 150 steps is a good start.

- This error appears on .exe startup. It always appears in 0.1, but the app should still work:

torchvision\io\image.py:13: UserWarning: Failed to load image Python extension:

torch\_jit_internal.py:751: UserWarning: Unable to retrieve source for @torch.jit._overload function: <function _DenseLayer.forward at 0x000001C305192700>.

warnings.warn(f"Unable to retrieve source for @torch.jit._overload function: {func}.")

torch\_jit_internal.py:751: UserWarning: Unable to retrieve source for @torch.jit._overload function: <function _DenseLayer.forward at 0x000001C3051A7A60>.

warnings.warn(f"Unable to retrieve source for @torch.jit._overload function: {func}.")
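The samples workaround from the list above (repeating one prompt on several lines) can be prepared outside the GUI and pasted in. This is a minimal sketch that assumes the GUI treats each line as one prompt, as the list describes. `repeat_prompt` is a hypothetical helper.

```python
# Sketch: build a block of text with the same prompt on N lines, to paste
# into the GUI's prompt box as a stand-in for "samples".
# Assumes one prompt per line; repeat_prompt is a hypothetical helper.
def repeat_prompt(prompt: str, copies: int) -> str:
    if copies < 1:
        raise ValueError("copies must be at least 1")
    line = prompt.strip()
    # No trailing newline: an empty final line could be read as an extra prompt.
    return "\n".join([line] * copies)


print(repeat_prompt("a watercolor fox in a forest", 3))
```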

Comments


My video card is missing as a CUDA device (GPU: 3070); only the CPU remains. SD GUI 1.8.0.

Then I get an error on startup (Detected no CUDA-capable GPUs.)

What version of stable diffusion does the Grisk Stable Diffusion GUI 0.1 run?

Does anyone know what is the default Sampling method in GRisk?

I was generating images today with this AI, and when I went to look at the results, they were just black pictures. When I came back here and read the "Important Stuff" section, the first line was about GTX 1660 cards not being able to render, and unfortunately my card is a GTX 1660 Super, so I guess I was unlucky.

When will you fix this?

It's been fixed for a long time on the Patreon, the GUI on Itch is just way, WAY out of date.

What is their Patreon?

https://www.patreon.com/DAINAPP

Can someone explain how to actually paint/mark areas with inpainting? I choose an input and I choose the inpaint model; what am I missing after that? All it does is render a new image.

When will AMD be supported?

Whenever I try to load a .ckpt model, I get this error message:

Traceback (most recent call last):

File "start.py", line 1388, in OnRender

File "start.py", line 1270, in LoadModel

File "convert_original_stable_diffusion_to_diffusers.py", line 630, in Convert

File "omegaconf\omegaconf.py", line 187, in load

FileNotFoundError: [Errno 2] No such file or directory: 'D:\\Downloads\\Stable Diffusion GRisk GUI\\v1-inference.yaml'

I keep getting out-of-memory errors even when it's only trying to allocate about 9 MB. Makes no sense. No solutions online.

I ran into an issue where I can run a prompt at 100, 200, 400 and 500 iterations, but 300 iterations gives an error:

Rendering: anime screenshot wide-shot landscape with house in the apple garden, beautiful ambiance, golden hour

0it [00:00, ?it/s]

Traceback (most recent call last):

File "start.py", line 363, in OnRender

File "torch\autograd\grad_mode.py", line 27, in decorate_context

return func(*args, **kwargs)

File "diffusers\pipelines\stable_diffusion\pipeline_stable_diffusion.py", line 152, in __call__

File "diffusers\schedulers\scheduling_pndm.py", line 136, in step

File "diffusers\schedulers\scheduling_pndm.py", line 212, in step_plms

File "diffusers\schedulers\scheduling_pndm.py", line 230, in _get_prev_sample

IndexError: index 1000 is out of bounds for dimension 0 with size 1000

V Scale is 7.50, resolution is 768x512, seed: 12345, on a 3080 12GB.

Why are steps limited to 500, even in the Patreon 0.52 version? (Not sure if it's connected in any way, but Disco Diffusion doesn't have a limit.)

Processes three random images every time alongside my query. Pretty sus.

Are you sure you don't have a line break in your text followed by nothing? That creates random pics.

Every time this has ever happened to me, it meant I had blank lines in the prompt, either before, or after what I actually typed.
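If you prepare prompts in a text file, you can strip the blank lines these two comments describe before pasting. This is a small sketch that assumes one prompt per line. `clean_prompts` is a hypothetical helper, not something the GUI provides.

```python
# Sketch: drop empty and whitespace-only lines from prompt text, since blank
# lines reportedly turn into extra "random" images.
# Assumes one prompt per line; clean_prompts is a hypothetical helper.
def clean_prompts(text: str) -> list[str]:
    return [line.strip() for line in text.splitlines() if line.strip()]


raw = "\nanime landscape, golden hour\n   \nportrait of a cat\n\n"
print("\n".join(clean_prompts(raw)))
```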

I paid 10 dollars and I am using version 0.52, but I still get a black screen. Also, I bought it but no money has been deducted from my card.

Patreon only charges you at the end of the month.

If you are having this problem, it is a hardware bug. You can use the float32 option as a workaround, but it will require more VRAM.

Thanks for the answer. Yes, I enabled the float32 option and got this error: RuntimeError: CUDA out of memory. Tried to allocate 114.00 MiB (GPU 0; 4.00 GiB total capacity; 2.99 GiB already allocated; 0 bytes free; 3.35 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

My graphics card is a GTX 1650 Super.

Any news since a month ago?

Did float16 (rather than float32) help? What about max_split_size_mb:128?
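The max_split_size_mb setting goes in the PYTORCH_CUDA_ALLOC_CONF environment variable, which PyTorch reads when it sets up its CUDA memory allocator. Because of that, one way to try it with the packaged .exe is to launch the .exe with the variable set. This is an untested sketch. It assumes the bundled PyTorch honors the variable (its error messages mention it, which suggests it does). The helper names are hypothetical. The setting can reduce fragmentation, but it does not add VRAM.

```python
import os
import subprocess

# Sketch: launch the GUI with PYTORCH_CUDA_ALLOC_CONF set for that process only.
# Assumes the bundled PyTorch reads this variable; helper names are hypothetical.


def env_with_alloc_conf(max_split_mb: int = 128) -> dict:
    env = os.environ.copy()  # copy so the current process is left unchanged
    env["PYTORCH_CUDA_ALLOC_CONF"] = f"max_split_size_mb:{max_split_mb}"
    return env


def launch_gui(exe_path: str, max_split_mb: int = 128) -> subprocess.Popen:
    # Starts the GUI as a child process that inherits the modified environment.
    return subprocess.Popen([exe_path], env=env_with_alloc_conf(max_split_mb))


# Example (run from the GUI folder):
# launch_gui("Stable Diffusion GRisk GUI.exe", max_split_mb=128)
```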

Really want to know why this program doesn't go out of memory (talking about system RAM, not VRAM) compared to every other SD repo/GUI. I have only 8GB of system RAM. (Thank you for making this!)

Not sure why other GUIs use that much RAM, but you're welcome.

Don't know where to ask. Can I interface with this in any way with code? I want to try to make a private Discord bot for me and my friends :)

Will it be possible to use the DALL-E2 base in future versions? Thx.

DALL-E 2's source code and model are not open source, so it's impossible for now.

It's a pity :(

I'm having this error when loading a dreambooth model:

Loading model (May take a little time.)

{'feature_extractor'} was not found in config. Values will be initialized to default values.

Traceback (most recent call last):

File "start.py", line 320, in OnRender

File "start.py", line 284, in LoadModel

File "diffusers\pipeline_utils.py", line 247, in from_pretrained

TypeError: __init__() missing 1 required positional argument: 'feature_extractor'

Dreambooth models are still not supported. I still need some time to make them work.

No problem, with the ckpt converter I don't need this program anymore.

Hello, I got this error when rendering a 512x512 image. I'm using an Nvidia 3060 Ti and 16 GB of RAM. Any solutions?

RuntimeError: CUDA out of memory. Tried to allocate 512.00 MiB (GPU 0; 8.00 GiB total capacity; 2.56 GiB already allocated; 2.69 GiB free; 2.58 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

I don't understand: 2.69 GiB is not enough to allocate 512 MiB? xD

Thanks in advance

It definitely should not use that much memory for a 512x512 image. Is anything else using the computer's VRAM?
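The numbers in the 3060 Ti error above support that question. Total capacity (8.00 GiB), minus the memory reported free (2.69 GiB), minus the memory reserved by PyTorch (2.58 GiB), leaves about 2.73 GiB held by something outside PyTorch's allocator. That could be other programs, the desktop, or the CUDA context itself. This sketch does that arithmetic for any message in the format quoted on this page. The regexes target this PyTorch message wording and are assumptions; other versions may word it differently. It explains where memory went, but not why the 512 MiB request still failed.

```python
import re

# Sketch: extract the numbers from a PyTorch "CUDA out of memory" message
# (format as quoted in these comments) and estimate VRAM held outside PyTorch.
UNIT_TO_GIB = {"bytes": 1 / 1024**3, "KiB": 1 / 1024**2, "MiB": 1 / 1024, "GiB": 1.0}
SIZE = r"([\d.]+) (bytes|KiB|MiB|GiB)"
FIELDS = {
    "request": r"Tried to allocate " + SIZE,
    "total": SIZE + r" total capacity",
    "allocated": SIZE + r" already allocated",
    "free": SIZE + r" free",
    "reserved": SIZE + r" reserved in total by PyTorch",
}


def summarize_oom(message: str) -> dict[str, float]:
    out = {}
    for name, pattern in FIELDS.items():
        match = re.search(pattern, message)
        if match is None:
            raise ValueError(f"field {name!r} not found in message")
        value, unit = match.groups()
        out[name] = float(value) * UNIT_TO_GIB[unit]  # normalize to GiB
    # Whatever is neither free nor reserved by PyTorch's caching allocator.
    out["outside_pytorch"] = out["total"] - out["free"] - out["reserved"]
    return {key: round(val, 2) for key, val in out.items()}


msg = ("CUDA out of memory. Tried to allocate 512.00 MiB (GPU 0; 8.00 GiB total "
       "capacity; 2.56 GiB already allocated; 2.69 GiB free; 2.58 GiB reserved "
       "in total by PyTorch)")
print(summarize_oom(msg))
```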

I got the patreon version, it works great! having tons of fun. Thanks for putting it together!

On my 1660 Ti it just outputs black, and it's not overclocked.

The free version has a bug that breaks the AI when using 16xx Nvidia cards, it's fixed on the Patreon, annoyingly.

Definitely sets off my pet peeve about devs withholding important bugfixes behind a paywall, though GRisk plans to eventually update the free one, whenever that is.

This bug is more of a hardware problem than a software one. The fix is pretty much a work around the hardware bug.

Please look into adding Dreambooth!

Yeah, it will be incorporated in the GUI eventually

Is there a Github for this?

If you want it so bad, pay the guy a bit. Coding isn't easy, takes time and work. $10 to support good coders and programs is worth it.

That's not an acceptable response. Standard procedure for these situations is that the latest version spends some time on Patreon and then is released publicly. They were only asking a reasonable question, you dingus.

"That's not an acceptable response" says the person name calling in a response. Paying coders is a proper response if you want something early. The coder is under no obligation to release it publicly for free. So, you can either wait patiently, or pay the coder for their efforts. I respect the coder for even releasing it for free at all. When the coder is ready to put it up for free, then they will. The coder needs to make a living, so your entitlement doesn't matter.

Have a great day.

Okay, when they asked? They weren’t bitching and moaning. They were simply asking a factual question. You need to get over yourself, promptly….

You simply have a twisted worldview and think that we’re all entitled little brats or something. They were just asking when it’s going to be available. Again, stop being a dick.

Says the person again calling names, acting aggressive, and telling a person with an opinion what they and another person who you don't know were thinking.

You must want drama and argument over the Internet.

Feel free to reply with more signaling and reactionism. I'll let you win.

End of line.

The only one being a dick is you, bro, you're a sentient human, maybe grow some self-awareness.

You're acting extremely entitled, assuming that a creator has to deliver you a free product under a stringent time constraint???

Since fucking when?

What IS standard procedure, is you paying someone for their work or shutting the fuck up and being happy they're giving you anything at all for free.

Nothing better than an entitled man-child with a chip on his shoulder letting his entitlement run wild!

You're basically saying that to get a bug fix that affects 3% (according to Steam, not the newest info) of all GPU owners (I am talking about the GTX 16xx guys), I have to pay $10. Like, are you crazy? Plus, he is not even a developer of Stable Diffusion; he just made a GUI version. $10 for just a GUI app, just listen to it: $10 for a GUI. And I wouldn't write this reply if I could download an original version, but I can't, because "you don't have Visual C++", never mind that I downloaded it 5 times, lol. So a better approach would be a VIP version with all those features, and a free one with only bugfixes.

Well, I see only two options for me: pirate it, or fix it for myself somehow.

this is exactly why i want the patreon version

Please no fighting in the comments. If anyone has any questions, you can always send me a private message on Patreon.

I tried, but your account doesn't show up when I search for people to message on Patreon.

I just did a test and you are right. I was under the impression that anyone could message me on Patreon, but it seems that only patrons can. The worst part is that there is no option for me to open private messages to everyone; this sucks.

Well, there is also my email in any case: griskai.yt@gmail.com

I think your question was when 0.5 would appear here? For now I don't have an answer. I will keep developing it on Patreon, and at some point it may appear here.

Ah OK, thanks for your response.

BTW, I only asked because of the one bugfix that fixes black output images on GTX 16xx cards, which is only available in the latest version on Patreon, and not here.

It's on Kemono.

I can't find it; send a link to the exact post there.

That's commonly caused by 16xx Nvidia GPUs, as far as I know. No clue what else causes it.

If you've got a 1650, 1660, whatever GPU, I'm pretty sure you're out of luck, the fix isn't enabled in the free version.

If you want to sell this, then why not simply sell it rather than go through Patreon?

There are still too many problems and missing options for it to be a full product. Possibly in the future.

Hey there! Will you ever make a "image input" option?

Hi there, it's already possible in the Patreon version. It may take some time to be public.

When will it be available for Linux, if ever?

It's a little hard, since it requires some code changes and I need a machine running Linux, but not impossible, I think.

Thanks! I hope you can make it possible soon!

There is no information about the tiers and access on patreon! Which tier do you have to have to access the download? Will there be any free updates here? What features does the patreon one even have? ???

Umm... the download is on this page & requires no payment.

Patreon is to support the dev & only promises early access to any potential future updates.

Wrong. Features previously available have already been blocked behind Patreon.

As wintergrey says, there is a free version on this page but that appears to be frozen at v0.1.

I believe you need to pay £8 per month to get the current version on Patreon (0.5). As you say, it isn't clear but I paid £4 then £8 - at £8 I could see the download.

Patreon only charge you at the end of the month, so you are free to test the tiers if you like without paying anything. In the future they may become free updates, but not for now.

There are already some updates in the Patreon version, like better memory usage and img2img.

Sorry but no, Patreon charges upfront for any tier so there is no free testing of tiers and they charge the first of the month no matter when you joined the month before. This is the week before the next month, paying $10 now in September and then having to pay $10 for October a week later is too soon for many people. On October 1st you will probably see an influx of patrons.

As someone who runs a little Patreon, nah.

Creators on Patreon get to choose if it's set to pay upfront, or just at the first of the month, and GRisk's Patreon is set to the first of the month. I still haven't been charged a cent since I subbed, but I have full access to the download just fine.

Someone could legitimately sub to GRisk, unsub at the end of the month and resub at the beginning of the next month and get full access for free forever, which is probably why most creators switch to the "pay upfront" mode.

Really, how did you sub to GRisk's Patreon without paying? I was charged as soon as I subscribed. Very interesting

Is there a chance you will make this for Linux, with a .deb?

I have never experimented with Linux; first I would need a machine running Linux as well.

AMD GPUs tend to have tons of memory, but CUDA-only applications can't take advantage of it.

It's possible to run PyTorch scripts on AMD; my rife-app runs on AMD as well. But it takes some extra work.

What exactly do I get with Patreon? Do I need to stay subbed to get new versions? How about using the software if I'm no longer a patron? Is there a list of features, i.e. how the "paid" version differs from this free one?

You can get new downloads as long as you stay subbed, and you can keep using the application forever if you cancel the sub. The Patreon version already has a lot of new stuff and uses less memory to run the model.

Hello, how do I add more models to pull from? I have the 0.5 version, btw.

There is a tutorial on Patreon. If you have any question you can send me a PM via Patreon

My computer has 2 graphics cards (3070 and 3080). How can I make the software take full advantage of all the graphics cards?

Good question. Never even thought of this.
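This question went unanswered on the page. One common workaround for a program that uses only one GPU is to run one copy per card and use the standard CUDA environment variables so each copy sees only its own GPU. This is an untested sketch. It assumes the GUI can run two instances at once and renders on the first visible CUDA device, and this page confirms neither. It would split prompts across cards, but it would not make a single image render faster. The helper names are hypothetical.

```python
import os
import subprocess

# Sketch: start one GUI process per GPU, each seeing only its own card.
# CUDA_VISIBLE_DEVICES / CUDA_DEVICE_ORDER are standard CUDA variables; whether
# the GUI tolerates two running instances is an assumption. Helper names are hypothetical.


def env_for_gpu(index: int) -> dict:
    env = os.environ.copy()
    # Number GPUs by PCI bus, like nvidia-smi does, instead of fastest-first.
    env["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    # Hide every other GPU; the chosen card appears as device 0 in the child.
    env["CUDA_VISIBLE_DEVICES"] = str(index)
    return env


def launch_per_gpu(exe_path: str, gpu_indices: list[int]) -> list[subprocess.Popen]:
    return [subprocess.Popen([exe_path], env=env_for_gpu(i)) for i in gpu_indices]


# Example (not run here):
# launch_per_gpu("Stable Diffusion GRisk GUI.exe", [0, 1])
```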

Is there a way to use less memory? Slow it down or something? I can't even render a single 64x64 image without running out of memory.

What GPU do you have?

Really nothing good. Nvidia 940M.

I thought that at least something small could be rendered with that but I guess I underestimated the program

Well if I try to generate 64x64 images, it uses all of my 3GB vRAM in my laptop. I don't know how much vRAM you have but you probably need at least more than 2GB to make it even function. But you might also need some newer features added to newer GPUs.

I can generate 320x320 images with my GTX 1050 mobile and 3 GB of vRAM, but it is still soooo slooooooooooow to generate something, like a minute or so. I'm afraid you are out of luck with this kind of technology; I don't know about the Patreon version, though. This software is really meant more for modern desktop GPUs, where you can easily generate big images in a matter of seconds. But you can generate some images using StabilityAI's official demo here: https://huggingface.co/spaces/stabilityai/stable-diffusion

You will wait for the results a bit, but it will work (most of the time, anyway).

Btw, 64x64 will generate just some random streaks of color; at 256x256 and up you can get low-quality but somewhat decent images.

The Patreon version uses less memory. It should run 512x512 with 4 GB of VRAM.
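Why does memory climb so fast with resolution? Here is a rough back-of-the-envelope sketch. It assumes the standard Stable Diffusion v1 layout: the VAE shrinks each side by 8 into a 4-channel latent, and the UNet runs self-attention at the full latent resolution. An attention-score matrix has one entry per pair of latent positions, so doubling both sides multiplies that part by 16. This ignores every other memory cost and any memory-saving tricks a particular version may use. The helper names are hypothetical.

```python
# Sketch, assuming Stable Diffusion v1: latent = 4 x (H/8) x (W/8), with
# self-attention at full latent resolution. Helper names are hypothetical.
def latent_positions(width: int, height: int) -> int:
    # Number of spatial positions in the latent the UNet works on.
    return (width // 8) * (height // 8)


def attention_entries(width: int, height: int) -> int:
    # Entries in one self-attention score matrix (per head, per image).
    n = latent_positions(width, height)
    return n * n


base = attention_entries(256, 256)
for w, h in [(256, 256), (256, 512), (512, 512), (768, 512)]:
    ratio = attention_entries(w, h) / base
    print(f"{w}x{h}: {latent_positions(w, h)} latent positions, "
          f"attention matrix {ratio:.0f}x the 256x256 size")
```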

I only get a black image. I tried different prompts. Do I need to download a library to use it? If so, how do I do this, step by step?

You must have a card that doesn't like half-precision models. Version 0.51 on Patreon should fix this.

It generates a black image for all prompts and all settings, using a GTX 1650, trying to generate "Minecraft Steve" with 150 iterations at 256x256.

Same for me. Trying to figure out what I'm doing wrong...

This software has some problems using half precision on GTX 16xx GPUs. It should work with full precision, but the free version of this GUI doesn't support it. An alternative for you could be this:

NMKD Stable Diffusion GUI - AI Image Generator

I don't own 16xx GPU, so I can't really confirm, but it should work with 16xx GPUs if you set it up correctly.

Hi! I got the app to run successfully, but I'm unable to get it to utilize my better graphics card, and it keeps running off of my integrated graphics. I've tried all the layman's solutions, using the Nvidia control panel and system settings to specify which card that stable diffusion should use, but it keeps trying to allocate space in my integrated graphics card. Any help is appreciated.

Hi there. You will be able to select the graphics card in 0.51, but only on Patreon for now.

My Nvidia MX330 2GB error: RuntimeError: CUDA out of memory. Tried to allocate 2.00 MiB (GPU 0; 2.00 GiB total capacity; 1.67 GiB already allocated; 0 bytes free; 1.74 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

Any solutions?

Edit: nvm, I know what's wrong now. The software will not allow rendering images at a resolution bigger than 512x512; as soon as you try something more HD, it gives the error... So in conclusion, this is just a dummy testing tool. Not worth the time downloading.

Getting the same error. My Nvidia RTX 2060 GDDR6 6GB. Bummer, Nvidia only.

GRisk, works great for me, looking forward to any updates you release. Thanks for creating this.